Modeling Social Interactions

Published at CoRL 2021 and the NeurIPS 2021 Cooperative AI Workshop

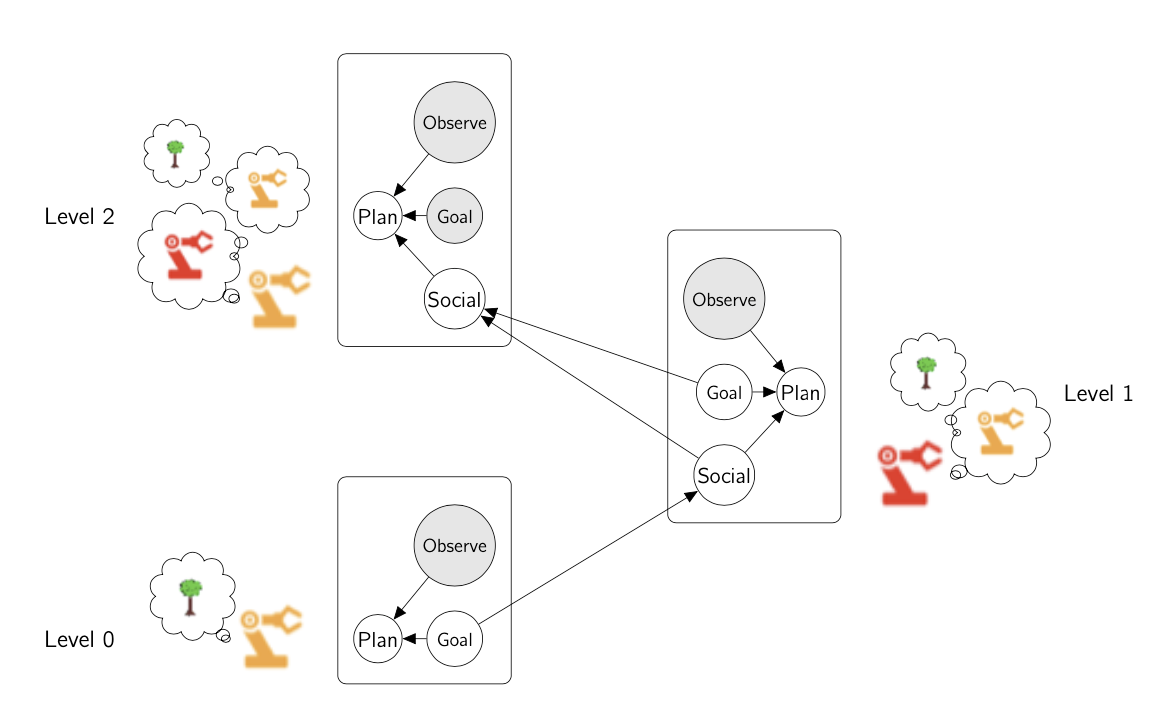

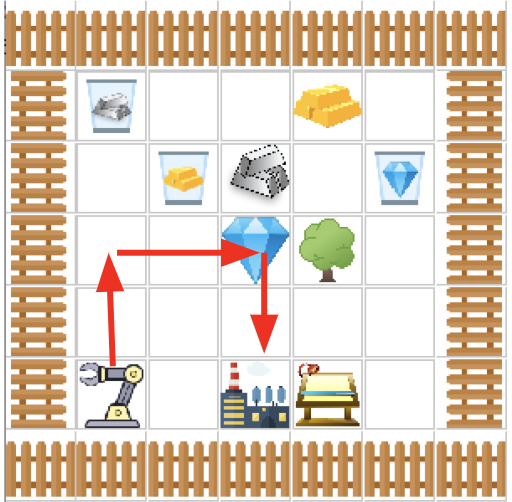

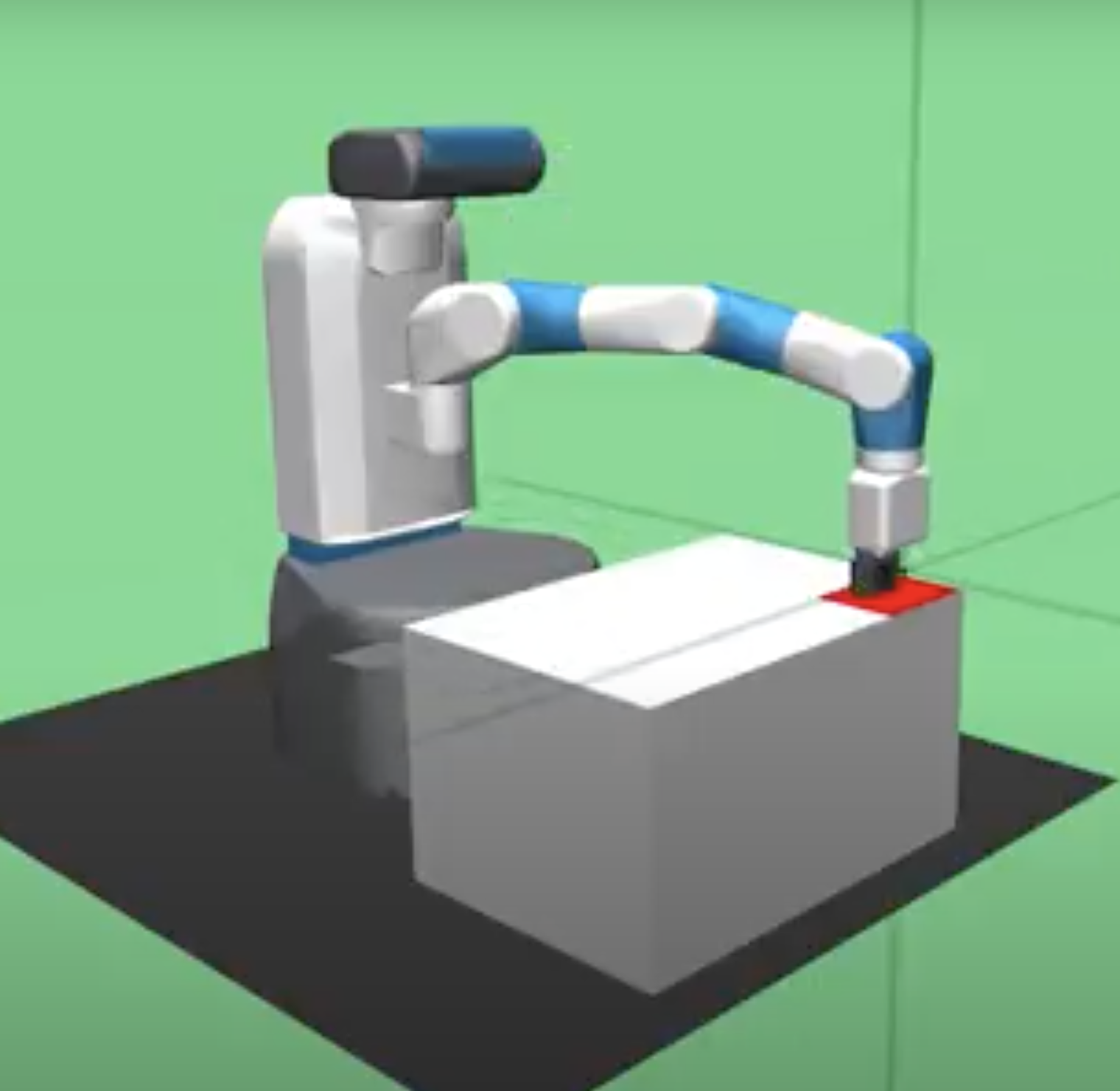

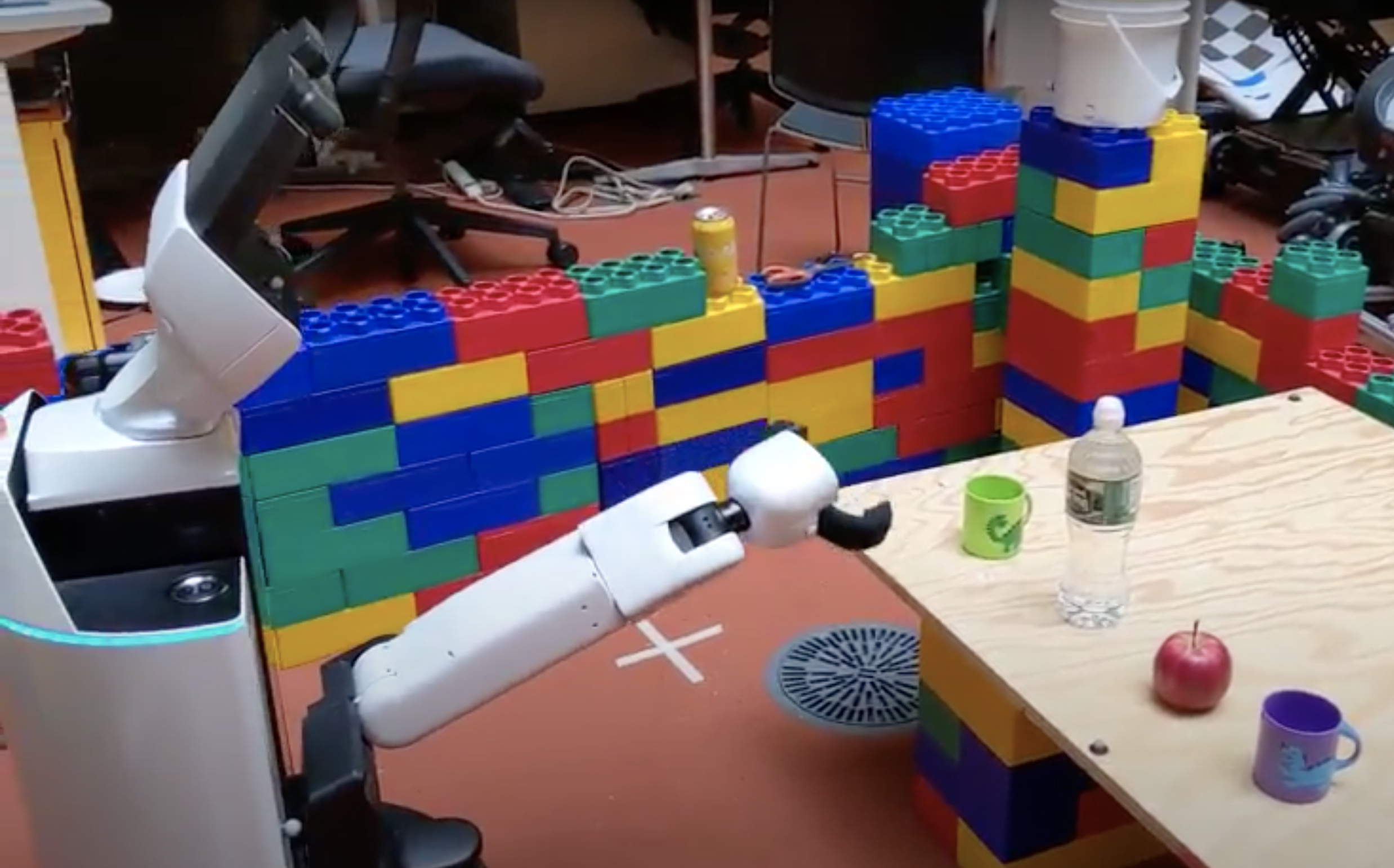

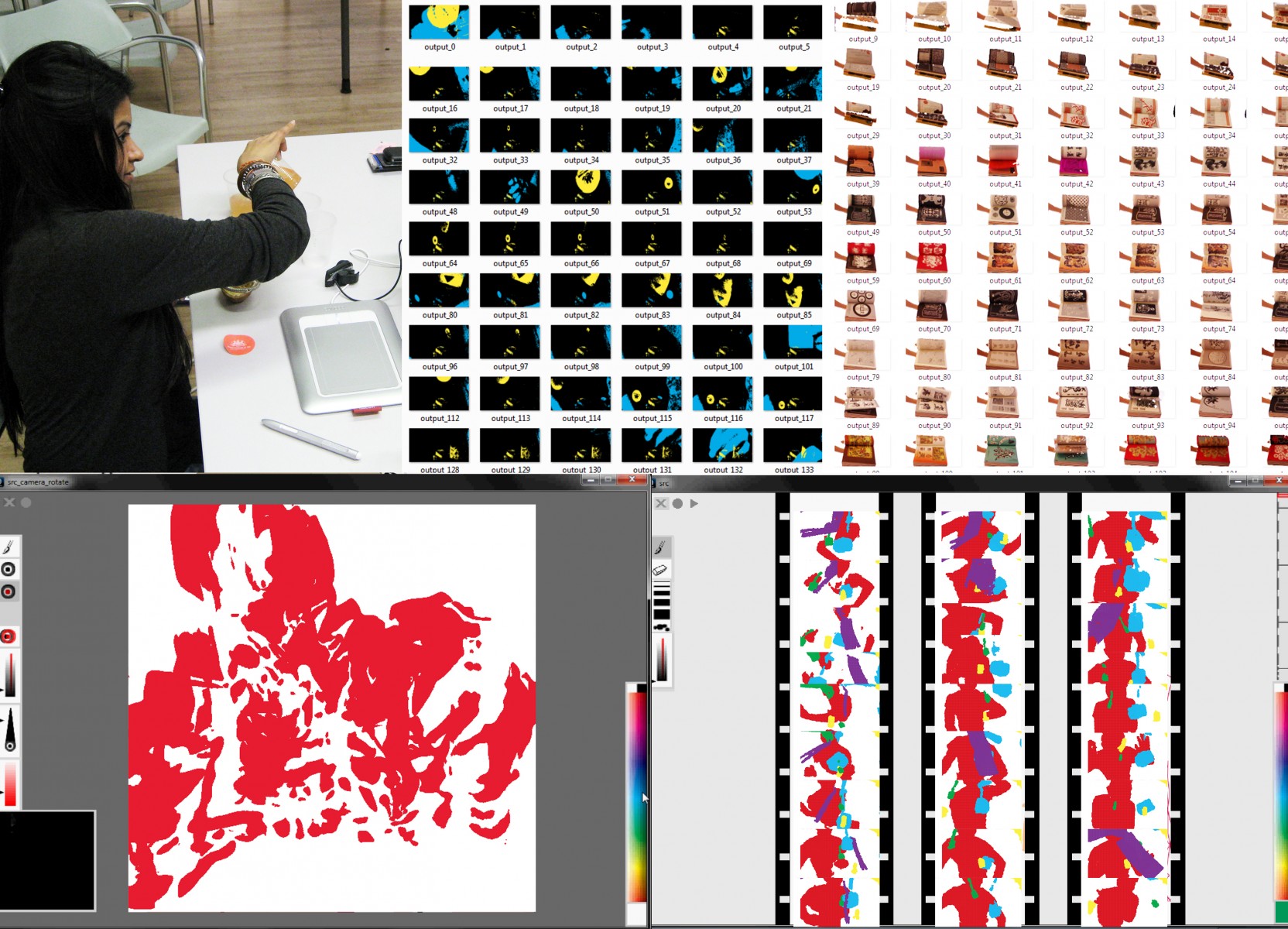

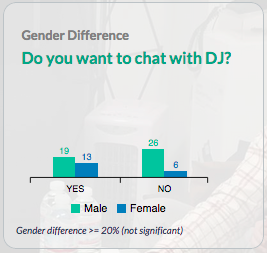

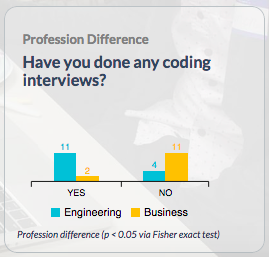

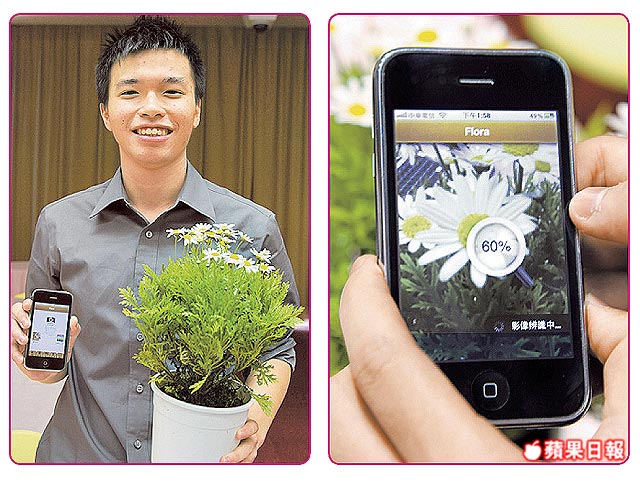

Robots that work alongside humans must interact with them socially. We present a computational framework that models social interactions as recursive Markov Decision Processes (MDPs). This formulation allows machines to understand what it means to help or hinder one another. Enabling robots to exhibit social skills could lead to smoother and more positive human-robot interactions; for instance, it could yield a more caring assistive robot. The model may also enable scientists to measure social interactions quantitatively, which could help them study social impairments.
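To illustrate how recursion can encode helping and hindering, here is a minimal sketch under heavy assumptions. It is not the formulation from the paper. It drops states and transitions entirely and keeps only a single decision made by agent 0. At level 0 an agent scores an outcome by its own physical reward. At level k it adds a social weight times the other agent's level k-1 score for the same outcome, so a positive weight favors helping and a negative weight favors hindering. The names `OUTCOMES`, `social_reward`, and `best_action`, the key scenario, and all reward numbers are hypothetical.

```python
from typing import Dict, Tuple

# Hypothetical one-step outcomes of agent 0's action:
# action -> (physical reward to agent 0, physical reward to agent 1).
# The numbers are invented for illustration only.
OUTCOMES: Dict[str, Tuple[float, float]] = {
    "give_key": (0.0, 5.0),   # agent 0 hands agent 1 the key it needs
    "keep_key": (1.0, 0.0),   # agent 0 keeps the key for itself
    "hide_key": (0.5, -5.0),  # agent 0 hides the key, blocking agent 1
}


def social_reward(agent: int, action: str,
                  weights: Tuple[float, float], level: int) -> float:
    """Level-`level` score that `agent` assigns to the outcome of `action`.

    Level 0: the agent's own physical reward only.
    Level k > 0: own reward plus weights[agent] times the other agent's
    level k-1 score for the same outcome. A positive weight rewards helping
    the other agent; a negative weight rewards hindering it.
    """
    own = OUTCOMES[action][agent]
    if level == 0:
        return own
    other = 1 - agent
    return own + weights[agent] * social_reward(other, action, weights, level - 1)


def best_action(weights: Tuple[float, float], level: int) -> str:
    """Agent 0 greedily picks the action with the highest level-`level` score."""
    return max(OUTCOMES, key=lambda a: social_reward(0, a, weights, level))


if __name__ == "__main__":
    print(best_action((1.0, 0.0), level=1))   # helpful agent 0
    print(best_action((-1.0, 0.0), level=1))  # adversarial agent 0
    print(best_action((0.0, 0.0), level=1))   # indifferent agent 0
```

With these made-up rewards, the helpful agent chooses `give_key`, the adversarial agent chooses `hide_key`, and the indifferent agent chooses `keep_key`. The same physical rewards thus produce helping, hindering, or neutral behavior depending only on the sign of the social weight. Raising `level` lets each agent also account for how the other agent weighs *its* outcome, which is the recursive part of the idea.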